CATAPULT PRO VIDEO

NEW STANDARD IN PERFORMANCE ANALYSIS SOFTWARE

Sports Video Analysis and Performance Analysis Software: Uncover performance insights like never before with faster workflows, integrated datasets, and more.

FASTER VIDEO ANALYSIS WORKFLOWS + BETTER SPORTS PERFORMANCE INSIGHTS

OUTFIT YOUR TEAM WITH ONE VIDEO ANALYSIS PLATFORM THAT DELIVERS CLEARER INSIGHTS IN LESS TIME.

- INTEGRATE EVERY DATASET WITH VIDEO

- REMOTE & LIVE COLLABORATION

- EMBEDDED PRESENTATION TOOLS

- NO CODING REQUIRED

- WIRELESS LIVE STREAMING

FOCUS

Capture multi-angle video + data during live games & practices.

MATCHTRACKER

Integrate & visualize every dataset across multiple sessions.

HUB

Share insights & collaborate with every user from any location.

CAPTURE EVERY MOMENT

Every dataset, video angle, and insight is accessible during live sessions, in post, or during training sessions.

- Capture, synchronize, and livestream multi-angle video on any device, from any location.

- Integrate athlete performance data with video to uncover new context for team & player performance.

- Connect any dataset, including a variety of third-party providers.

- Automate data capture with logic-based templates during live sessions.

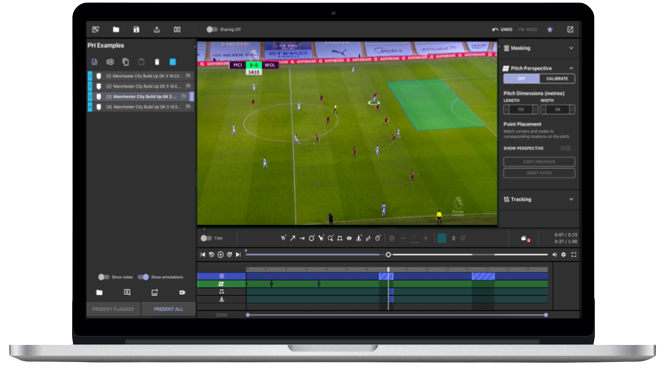

STREAMLINED ANALYSIS WORKFLOWS

Save time with no-code analysis workflows. Access powerful visualizations that integrate with video across every performance dataset.

- View insights in custom workbook views with preset filters.

- Filter & sort data across every game, multiple sessions, or entire seasons.

- Access a variety of visualizations, including 2D pitch views, graphs, and more.

- Collect insights & share them instantly with your entire team.

CREATE MASTERFUL PRESENTATIONS

Access a suite of presentation tools included in the Pro Video platform and available in each application.

- Add video collections to presentations.

- Automatically annotate player movements.

- Overlay text, images, audio, and a variety of visualizations.

SHARE EVERYTHING

Every analyst, coach, and athlete now has access to every performance insight from any location or device.

- Share content, insights, and presentations with anyone, in any location, via the cloud.

- Customize sharing permissions for every user or group.

- Stream video live from any location on any device.

- Collaborate from any location with remote sessions.

LEARN MORE

GO IN-DEPTH

Download the Brochure to learn more about our Pro Video products.

GET HANDS ON

See how Pro Video works for your team by requesting a demo.

GET THE LATEST INSIGHTS

Discover how teams use Catapult Pro Video to redefine their performance analysis workflows, and explore our Ultimate Guide for Video Analysis.

Tactical Analysis Video Series

Access our series of on-demand videos to learn how to optimize your team's tactical analysis workflows with Pro Video.

5 New Pro Video Features You Need To Know

3 New Time-Saving Presentation Features You Need To Know

How Catapult Pro Video is Redefining Performance Analysis for Elite Sport Teams